AnandTech Article Channel

- IOGear Demonstrates HDMI Switching Solutions at CES 2013

- Swiftech and Steiger Dynamics: German Engineering Comes Home

- CES 2013: Cases and Cooling in the New Year

- Intel Brings Core Down to 7W, Introduces a New Power Rating to Get There: Y-Series SKUs Demystified

- Samsung's Exynos 5 Octa: Powered by PowerVR SGX 544MP3, not ARM's Mali

- ASUS $149 7-inch MeMO Pad Headed to the US, Powered by VIA SoC & Android 4.1

- Ambarella Announces A9 Camera SoC - Successor to the A7 in GoPro Hero 3 Black

- Brian's Concluding Thoughts on CES 2013 - The Pre-MWC Show

- WiLocity Bringing WiGig To Your Desk, Lap, Home and Office

IOGear Demonstrates HDMI Switching Solutions at CES 2013
Posted: 14 Jan 2013 02:00 AM PST

We visited IOGear's booth at CES and saw a variety of devices, including HDMI switching solutions, I/O devices and other A/V gear. This post covers only the HDMI switching solutions. Two products stood out in the demo.

The first is targeted at home consumers, and IOGear touts it as the first wireless streaming matrix for home use. It has 5 HDMI inputs and 2 HDMI outputs: one output is hardwired, while the second is wireless. A wireless receiver is bundled with the unit and can be placed up to 100 ft away (across walls). The wireless link is based on WHDI (a 5 GHz technology). The input for the wireless HDMI output can be selected from the second room, and the device can also clone its HDMI output across the two locations. It supports 3D over HDMI up to 1080p24. The device does blank out the relevant output while switching, but that shouldn't be a major factor in home usage scenarios. The Wireless 5x2 HD matrix (GWHDMS52) will ship in the spring for $400.

IOGear also has a rackmount 4x4 switcher meant for custom installers and the professional crowd. This model offers zero-delay switching without output blanking, and it can be controlled using the front panel, an IR remote or RS-232 for professional applications. The AVIOR GHMS8044 sells online for around $700 (MSRP is $820).
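To make the "5x2 matrix" terminology concrete, here is a minimal Python sketch of how a matrix switch routes sources: each output independently selects one input, and "cloning" simply means every output has selected the same input. The `MatrixSwitch` class, its methods and the output numbering are hypothetical illustrations, not IOGear's actual remote, app or RS-232 control interface.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class MatrixSwitch:
    """Hypothetical model of an N-input x M-output HDMI matrix switch."""

    num_inputs: int
    num_outputs: int
    # Maps each output index to the input index currently routed to it.
    routes: Dict[int, int] = field(default_factory=dict)

    def select(self, output: int, source: int) -> None:
        """Route input `source` to `output`; other outputs are unaffected."""
        if not 0 <= output < self.num_outputs:
            raise ValueError(f"no such output: {output}")
        if not 0 <= source < self.num_inputs:
            raise ValueError(f"no such input: {source}")
        self.routes[output] = source

    def source_for(self, output: int) -> Optional[int]:
        """Return the input routed to `output`, or None if unassigned."""
        return self.routes.get(output)

    def is_cloned(self) -> bool:
        """True when every output shows the same input (output cloning)."""
        sources = {self.routes.get(o) for o in range(self.num_outputs)}
        return len(sources) == 1 and None not in sources


# A 5x2 layout: output 0 assumed to be the wired TV, output 1 the wireless room.
switch = MatrixSwitch(num_inputs=5, num_outputs=2)
switch.select(output=0, source=2)   # living room watches input 2
switch.select(output=1, source=4)   # second room picks input 4
print(switch.is_cloned())           # False
switch.select(output=1, source=2)   # second room mirrors the first
print(switch.is_cloned())           # True
```

The point of the model is that routing is per output, which is why one room can change its source without disturbing the other, and why cloning needs no special mode.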

IOGear also showed some high-capacity mobile battery chargers (up to 11,000 mAh) and a Realtek-based WiDi/Miracast sink. The GWAVR WiDi/Miracast sink will debut at an MSRP of $80.

Swiftech and Steiger Dynamics: German Engineering Comes Home
Posted: 13 Jan 2013 11:34 PM PST

I don't know about you, but for me, the word "engineering" gets a lot more enticing when it's preceded by the word "German" (or the phrase "Commander LaForge, please report to."). Nanoxia, Swiftech, and Steiger Dynamics were all sharing a suite at CES this year, and while I've already seen most of what Nanoxia has to offer with the Deep Silence 1, Swiftech and Steiger Dynamics were another thing entirely.

My meeting with Swiftech was brief and focused predominantly on their new H220 closed-loop cooler. While Swiftech has offered watercooling kits of all types for a long time now, the H220 is targeted squarely at the market served by Corsair's H100i, Thermaltake's Big Water 2.0 Extreme, and NZXT's Kraken X60. The H220 really screams quality, though, and you can tell Swiftech has been in the watercooling game for some time when you start examining the details. Unlike most competing solutions, the H220's reservoir can be refilled, and the radiator uses brass tubing surrounded by copper fins (most closed-loop coolers sourced from Asetek or CoolIT rely on aluminum fins in the radiator). If you open the cooling loop (and Swiftech has designed the H220 for exactly that), the pump on the CPU waterblock is capable of handling the thermal load from an overclocked processor and two GeForce GTX 680s.

Swiftech had four comparison systems on display to show just how much better the H220 is than Thermaltake's and Corsair's solutions: three systems each used a 240mm cooler from one of the three companies, and the fourth extended the H220's cooling loop to include two GTX 680s. The H220 performed roughly 5C better than the competitors at comparable or lower noise levels. While I'm skeptical about the comparison systems (no two i7-3770Ks overclock exactly the same), I'm still pretty confident the H220 will be a force to be reckoned with.
Swiftech's H220 will retail at an MSRP of $139.

Meanwhile, Ganesh was gracious enough to coordinate a meeting between me and freshly minted system integrator Steiger Dynamics. Steiger Dynamics has one product, but it's a doozy: the LEET. While the name may not excite you, the product ought to at least pique your interest. The LEET is essentially a custom desktop designed to be a media center and, more specifically, a gaming machine. Comparable products like the DigitalStorm Bolt, the iBuyPower Revolt, and Alienware's X51 all look more like gaming consoles and were designed to see just how much power could be crammed into a specific envelope; the LEET goes in the opposite direction. Steiger Dynamics has produced something that looks like a home theater appliance, and within the enclosure is a custom liquid cooling loop (produced with Swiftech's aid, naturally) capable of supporting an Intel Sandy Bridge-E hexa-core Core i7 along with dual GeForce GTX 690s.

Where this product proves itself, though, is in how silently it runs. While pushing the CPU and graphics hardware at full bore (we're talking Prime95 plus FurMark) will produce an audible increase in noise, in gaming the LEET is essentially silent. I tried Crysis 2 and Far Cry 3, both at their maximum 1080p settings, and I didn't hear a peep from the system on display. Steiger is still small, but they have a very attractive product. The price is going to put it out of reach for a lot of users (it starts at a not inconsiderable $1,798), but for those who can afford it, it's going to be a very impressive machine. This isn't something that can easily be built from off-the-shelf parts, and it shows. Expect to hear more from Steiger Dynamics in the future.

CES 2013: Cases and Cooling in the New Year
Posted: 13 Jan 2013 11:01 PM PST

I'm pleased to report that this year's visit to CES bore promising fruit for the coming year in desktop PC cases, cooling, and even desktop machines in general. While the way notebooks and tablets will shake out over the next couple of years is somewhat difficult to pin down, the chassis and PC cooling industries showed very clear trends. Much as when Jarred and I lauded the notebook industry for largely dispensing with glossy plastic (a practice HP has backslid on horribly with their G series and Pavilion notebooks), gaudy and ostentatious "gamer" cases are on the way out in favor of more staid and streamlined designs.

Cougar's questionable Challenger case (reviewed last year) aside, most of the disconnect seems to stem from Taiwanese manufacturers and designers having a hard time reading western markets. That problem was largely absent from Rosewill's, Thermaltake's, and CoolerMaster's lines this year, though Enermax seems to be lagging behind. When I visited Enermax, they were showing off a few cases, but a consistent problem nagged them: two USB 2.0 ports and only one USB 3.0 port. I asked why, and they said dual USB 3.0 ports drove the cost up by a couple of dollars. This is reminiscent of the same attitude that's burying the non-Apple notebook industry (especially in the face of tablets): a failure to understand that while western consumers are tight, they're not that tight.

Thankfully, most of the rest of the case industry seems to have caught up. Thermaltake, once one of the biggest offenders, showed off their surprisingly elegant "Urban" series of silent enclosures destined to compete with NZXT's H2. Meanwhile, NZXT's new Phantom 630 is still on the ostentatious side, but only just. The biggest news for cases is that case design as a whole has progressed.
Space behind the motherboard tray, and specifically channels for cabling, is pretty much standard now. What I was happy to see was USB 3.0 via internal headers proliferating down to the sub-$70 market, along with fan controllers everywhere. 140mm fan mounts are also becoming increasingly common, and the majority of manufacturers are trying to produce cases that can support 240mm radiators like the one on Corsair's H100i.

Speaking of closed-loop cooling, this is pretty much the year for that technology to really take off. While NZXT and Corsair are still working off of designs that pair copper waterblocks with aluminum fins in the radiators, CoolerMaster's Eisberg line and Swiftech's new 240mm cooler both use copper fins in their radiators, in addition to beefier waterblocks and pumps. NZXT (by way of Asetek) remains the only purveyor of 140mm-based radiators for the time being, but I don't expect that to last. Meanwhile, Zalman's liquid cooler doesn't use a conventional radiator at all, instead opting for a custom design reminiscent of their CPU heatsinks. There were plenty of air coolers on display, too, but it's clear closed-loop liquid cooling is the direction things are going.

Finally, a brief word about boutiques. The last two years have suggested that boutiques and system integrators are beginning to seriously diverge, differentiating themselves from each other primarily by offering custom chassis and notebook modifications. This year that was made plain by iBuyPower's aggressive retail push with their wholly custom Revolt and DigitalStorm's revised Bolt and Aventum II. Every year someone proclaims the death of the desktop, and every year physics tells them what to go do with themselves. Powerful desktops and enthusiast machines are definitely getting physically smaller and more niche as a market, but desktops continue to offer the best longevity and bang for the buck of any personal computing platform.
The PC gaming industry in particular has been tremendously revitalized, and while NVIDIA's GRID suggests a future of cloud gaming, that future is still a ways off. In the meantime, 2013 should remain fairly bright for enthusiasts and do-it-yourselfers.

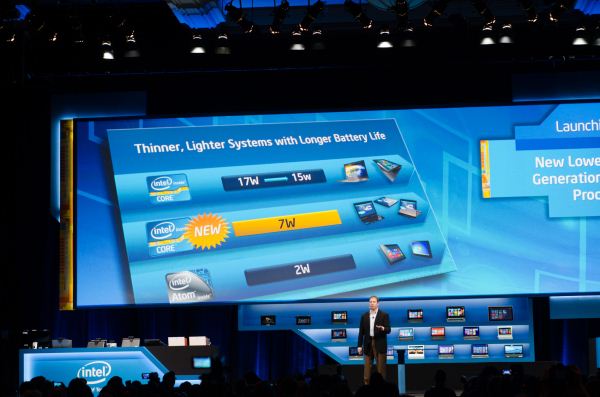

Intel Brings Core Down to 7W, Introduces a New Power Rating to Get There: Y-Series SKUs Demystified
Posted: 13 Jan 2013 08:56 PM PST

For all of its modern history, Intel has specified a TDP rating for all of its silicon. The TDP rating is given at a specific max core temperature (Tj_MAX) so that OEM chassis designers know how big to make their cases and what sort of cooling is necessary. Generally speaking, anything above 50W ends up in some form of desktop (or all-in-one), while parts with TDPs below 50W can go into notebooks. Below ~5W you can go into a tablet (think iPad/Nexus 10), and below 2W you can go into a smartphone. These are rough guidelines, and there are obviously exceptions.

With Haswell, Intel promised to deliver SKUs as low as 10W. That's not quite low enough to end up in an iPad, but it's clear where Intel is headed. In a brief statement at the end of last year, Intel announced that it would bring a small number of 10W Ivy Bridge CPUs to market in advance of the Haswell launch. At IDF we got a teaser that Intel could hit 8W with Haswell, and given that Haswell and Ivy Bridge are both built at 22nm with relatively similar architectures, it's not too far of a stretch to assume that Ivy Bridge could also hit a similar power target. Then came the CES announcement: Intel will deliver 7W Ivy Bridge SKUs starting this week. Then came the fine print: the 7W SKUs are rated at a 10W or 13W TDP, and only reach 7W under Intel's new Scenario Design Power (SDP) spec. Uh oh.

Let's first look at the new lineup. The table below includes both the new Y-series SKUs and the best 17W U-series SKUs:

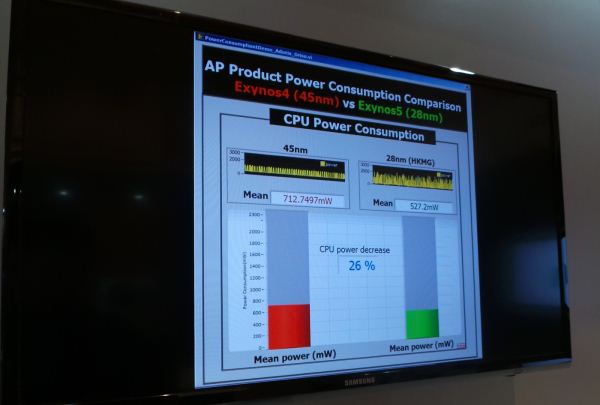

Compared to a similarly configured U-series part, moving to a Y-series/7W part usually costs you 200MHz in base clock, ~250MHz in max GPU clock, and 200 - 300MHz in max turbo frequency. Cache sizes, features and Hyper-Threading are unchanged between comparable U and Y parts. The lower clocks are likely the result of lower operating voltages and a side effect of the very-low-leakage binning. The cost of all of this? Around an extra $30 over a similar U-series SKU. That doesn't sound like much, but when you keep in mind that most competing ARM-based SoCs sell for around $30 themselves, it is a costly adder from an OEM's perspective.

Now the debate. Intel undoubtedly should have been very specific about 7W being an SDP rating, especially when the launch slide compared it to the TDPs of other Intel parts. Intel failed to do this, which brought on a lot of criticism. To understand how much of the criticism was warranted, we first need to understand how Intel comes up with a processor's TDP and SDP ratings.

Intel determines a processor's TDP by running a few dozen workloads on the product and measuring thermal dissipation/power consumption. These workloads include individual applications, multitasking workloads (CPU + GPU, for example) and synthetic measures that are more closely related to power viruses (i.e. workloads that specifically try to switch as many transistors in parallel as possible). The processor's thermal behavior across all of these workloads determines its TDP at a given clock speed.

Scenario Design Power (SDP), on the other hand, is specific to Intel's Y-series SKUs. Here Intel takes a portion of a benchmark that stresses both the CPU and GPU (Intel wouldn't specify which one; my guess would be something 3DMark Vantage-like) and measures average power over a thermally significant period of time (as with TDP, you're allowed to exceed SDP momentarily so long as the average is within spec).
Intel then compares its SDP rating against other typical touch-based workloads (think web browsing, email, gaming, video playback, multitasking, etc.) and makes sure that average power in those workloads is still below SDP. That's how a processor's SDP rating is born. If you run a power virus or any of the more stressful TDP workloads on a Y-series part, it will dissipate 10W/13W. However, a well-designed tablet will thermally manage the CPU down to a 7W average; otherwise you'd likely end up with a device that's too hot to hold.

Intel's SDP ratings will only apply to Y-series parts; the rest of the product stack remains SDP-less. Although SDP debuted with Ivy Bridge, we will see the same ratings applied to Haswell Y-series SKUs as well. While Y-series parts will be used in tablets, some ultra-thin Ultrabooks will use them too. In a full-blown notebook there's a much greater chance of a 7W SDP Ivy Bridge hitting 10W/13W, but once again the burden falls on the OEM to properly manage thermals and deliver a good experience.

The best comparison I can make is to the data we saw in our last power comparison article. Samsung's Exynos 5 Dual (5250) generally drew less than 4W, but during an unusually heavy workload we saw it jump to nearly 8W. While Samsung (and the rest of the ARM partners) don't publicly specify a TDP, by Intel's definition 4W would be the SoC's SDP and 8W its TDP, if our benchmarks were the only ones used to determine those values.

Ultimately, that's what matters most: how far Intel is from being able to fit Core into an iPad or Nexus 10 style device. Assuming Intel will get there with Ivy Bridge is a bit premature, and I'd probably say the same thing about Haswell. The move to 14nm should be good for up to a 30% reduction in power consumption, which could be what it takes.
That's a fairly long time from now (Broadwell is looking like 2H 2014), and time during which ARM will continue to strengthen its position.

Acer's W700 refresh, with 7W SDP Ivy Bridge in tow

As for whether 7W SDP parts will actually run any cooler than conventional 10W/13W SKUs, they should. They run at lower voltages and are binned to be the lowest-leakage parts at their target clock speeds. Acer has already announced a successor to its W700 tablet based on 7W SDP Ivy Bridge, with a 20% thinner and 20% lighter chassis; the cooler-running CPU likely has a lot to do with that.

Then there's the question of whether a 7W SDP (or a future 5W SDP Haswell/Broadwell) Core processor would still outperform ARM's Cortex A15. If Intel can keep clocks up, I don't see why not. Intel promised 5x the performance of Tegra 3 with a 7W SDP Ivy Bridge CPU. Cortex A15 should be good for around 50% better performance than Cortex A9 at similar frequencies, so ARM still has a decent gap to make up.

At the end of the day, 7W SDP Ivy Bridge (and future parts) are good for the industry. Intel should simply have done a better (more transparent) job of introducing them.
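Both TDP and SDP, as described here, hinge on an average over a "thermally significant" window: short bursts above the rating are tolerated as long as the windowed average stays within it. The Python sketch below illustrates that check with a sliding-window average. Intel hasn't disclosed its window length or sampling rate, so the 1 Hz samples, the four-sample window and both power traces are purely illustrative assumptions, not measured data.

```python
from typing import Sequence


def max_window_average(samples: Sequence[float], window: int) -> float:
    """Highest mean power over any `window` consecutive samples (sliding sum)."""
    if not 1 <= window <= len(samples):
        raise ValueError("window must be between 1 and len(samples)")
    running = sum(samples[:window])
    best = running
    for i in range(window, len(samples)):
        # Slide the window by one sample: add the newest, drop the oldest.
        running += samples[i] - samples[i - window]
        best = max(best, running)
    return best / window


def within_rating(samples: Sequence[float], window: int, rating_watts: float) -> bool:
    """True if no window's average power exceeds the rating."""
    return max_window_average(samples, window) <= rating_watts


# Hypothetical 1 Hz power traces in watts (illustrative only).
bursty = [4, 4, 10, 4, 4, 4, 10, 4]   # brief 10W spikes
sustained = [10] * 8                  # power-virus style sustained load

print(max_window_average(bursty, 4))     # 5.5
print(within_rating(bursty, 4, 7.0))     # True: the spikes average out under 7W
print(within_rating(sustained, 4, 7.0))  # False: a tablet would have to throttle
```

The sliding sum keeps the check linear in the length of the trace. The same function run against a 10W or 13W limit would model the TDP side of the comparison.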

Samsung's Exynos 5 Octa: Powered by PowerVR SGX 544MP3, not ARM's Mali
Posted: 13 Jan 2013 05:09 PM PST

At CES, Samsung announced its Exynos 5 Octa SoC featuring four ARM Cortex A7s and four ARM Cortex A15s. Conspicuously absent from the announcement was any mention of the Exynos 5 Octa's GPU configuration. Given that the Exynos 5 Dual featured an ARM Mali-T604 GPU, we simply assumed that the 4/8-core version would do the same. Based on multiple sources, we're now fairly confident in reporting that Samsung equipped the Exynos 5 Octa with a PowerVR SGX 544MP3 GPU running at up to 533MHz.

The PowerVR SGX 544 is a lot like the 543 used in Apple's A5/A5X, but with the addition of DirectX 10 class texturing hardware and 2x faster triangle setup. There are no changes to the unified shader ALU count. Taking into account the very aggressive max GPU frequency, peak graphics performance of the Exynos 5 Octa should fall between Apple's A5X and A6X (assuming Samsung's memory interface is just as efficient as Apple's).
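The "between A5X and A6X" placement can be sanity-checked with back-of-the-envelope peak shader throughput: cores × shader pipes per core × FLOPs per pipe per clock × clock. In the Python sketch below, only the 544MP3 core count and its 533MHz clock come from this post. The per-core pipe counts (4 USSE2 pipes for SGX543/544, 8 for SGX554, with the A6X assumed to use an SGX554MP4), the 8 FLOPs per pipe per clock, and Apple's GPU clocks of roughly 250MHz (A5X) and 280MHz (A6X) are assumptions based on commonly cited estimates; Apple doesn't publish these figures.

```python
def peak_gflops(cores: int, pipes_per_core: int, clock_mhz: float,
                flops_per_pipe_per_clock: int = 8) -> float:
    """Theoretical peak shader GFLOPS; ignores memory bandwidth and efficiency."""
    return cores * pipes_per_core * flops_per_pipe_per_clock * clock_mhz / 1000.0


# (cores, shader pipes per core, clock in MHz): assumed configurations.
gpus = {
    "Apple A5X (SGX543MP4)": (4, 4, 250),
    "Exynos 5 Octa (SGX544MP3)": (3, 4, 533),
    "Apple A6X (SGX554MP4)": (4, 8, 280),
}

for name, (cores, pipes, mhz) in gpus.items():
    print(f"{name}: {peak_gflops(cores, pipes, mhz):.1f} GFLOPS")
```

Under these assumptions, the script prints 32.0, 51.2 and 71.7 GFLOPS respectively. That places the Octa between the two Apple parts on paper; real-world results still depend on the memory interface caveat above.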

It's good to see continued focus on GPU performance by the major SoC vendors, although I'd like to see a device ship with something faster than Apple's highest-end iPad. At the show we heard that this might happen in the form of an announcement in 2013, with a shipping device in 2014.


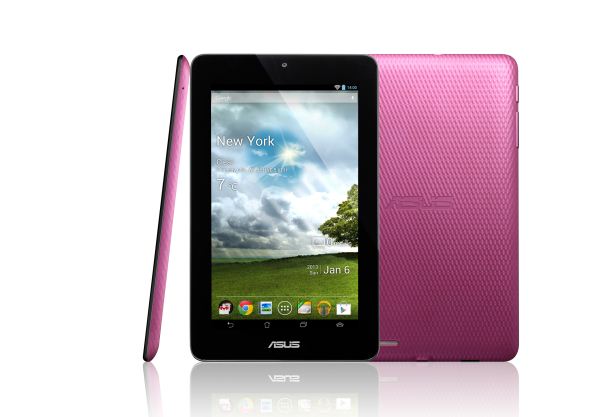

ASUS $149 7-inch MeMO Pad Headed to the US, Powered by VIA SoC & Android 4.1
Posted: 13 Jan 2013 05:03 PM PST

Just after we got back from CES, ASUS wrote to tell us that a cost-reduced version of the 7-inch MeMO Pad was coming to the US. Starting at $149 for an 8GB model, the MeMO Pad features a VIA WM8950 SoC. We haven't seen the VIA name in a while, but inside the SoC is a single ARM Cortex A9 CPU running at up to 1GHz and an ARM Mali-400 GPU of unknown core configuration. Total DRAM size hasn't gone down compared to the Nexus 7, but display resolution has (1024 x 600 vs. 1280 x 800). ASUS is promising a viewing angle of up to 140 degrees and 350 nits max brightness for the MeMO Pad's 7-inch display. Unlike the Nexus 7, the MeMO Pad does come with a microSD card slot to expand storage beyond its default 8/16GB configuration. The chassis looks very similar to the Nexus 7's, but it is slightly thicker and weighs a little more as well. Battery capacity hasn't been touched, though. There's a 1MP front-facing camera with an f/2.0 lens. The MeMO Pad will ship with Android 4.1, and ASUS was quick to point out that the device will ship with Google's Play Store and that the US version will support Hulu Plus, Netflix and HBO Go.
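The resolution drop is easier to appreciate as pixel density: the diagonal length in pixels divided by the diagonal in inches. A minimal Python sketch, assuming both screens measure exactly 7.0 inches diagonally (actual panel diagonals may differ slightly):

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: diagonal length in pixels divided by diagonal in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


memo_pad = pixels_per_inch(1024, 600, 7.0)
nexus_7 = pixels_per_inch(1280, 800, 7.0)
print(f"MeMO Pad: {memo_pad:.0f} ppi, Nexus 7: {nexus_7:.0f} ppi")
```

That works out to roughly 170 ppi versus 216 ppi, and 40% fewer pixels overall (614,400 vs. 1,024,000).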

The bill of materials reduction translates to a $50 savings at retail: the MeMO Pad will start at $149, compared to $199 for the Nexus 7. While I bet the latter will remain the 7-inch Android tablet of choice for discerning consumers, the MeMO Pad is ASUS' attempt to gain a foothold in the ultra-competitive, low-cost tablet market. Availability in the US is expected starting in April.


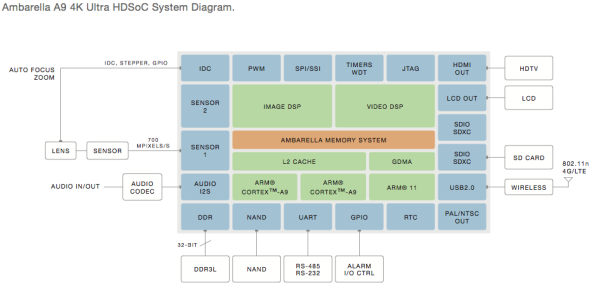

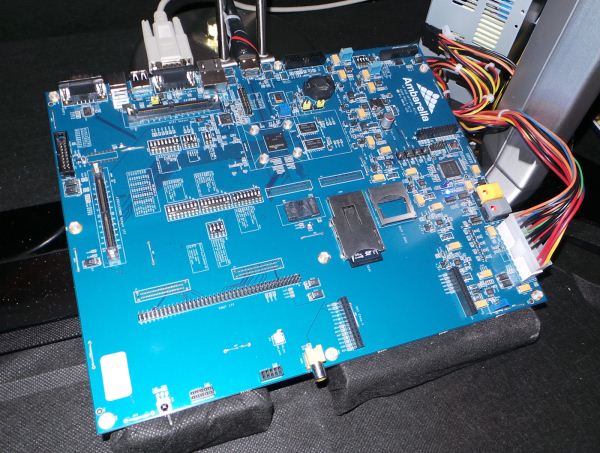

Ambarella Announces A9 Camera SoC - Successor to the A7 in GoPro Hero 3 Black
Posted: 13 Jan 2013 03:48 PM PST

Since the holidays I've been playing with, and trying to review, the GoPro Hero 3 Black, a small sports-oriented portable camera that can record up to 4K15 or 1080p60 video with impressive quality. Inside the GoPro Hero 3 Black is an Ambarella A7 camera system on chip, while the Hero 3 White and Silver contain the previous generation Ambarella A5S. Both the A5S and A7 are built on Samsung's 45nm CMOS process.

During CES, Ambarella announced the successor to the A7: the A9 (neither of which should be confused with ARM's Cortex A7 or A9 CPU cores). This new camera SoC moves to Samsung's 32nm HK-MG process and brings both lower power consumption for the same workloads and, thanks to higher performance, the ability to record 4K30, 1080p120 or 720p240 video, double the framerate of the previous generation. As the direct successor to the A7, the A9 enables 4K video capture at a framerate high enough (30FPS) for playback without judder; the previous generation's 4K15 capture pretty much limited it to recording high resolution timelapses or other scenes that would be played back at increased speed. The A9 SoC also includes two ARM Cortex A9 cores at up to 1.0 GHz, up from a single ARM11 at 528 MHz in the previous generation. Ambarella claims under 1 watt of power consumption for encoding 1080p60 video on the A9, and under 2 watts for 4K30 capture. The A9 also has built-in support for HDR video capture by combining two frames, so 1080p120 becomes 1080p60 with HDR enabled.

I got a chance to look at both HDR video capture on the A9 and 4K30 video encoded on a development board and decoded by the same board on a Sony 4K TV.
I came away very impressed with the resulting video quality; clearly the age of 4K/UHD is upon us, with inexpensive encoders like these making their way into small form factor cameras. Since the A9 SoC is now available to customers, I wouldn't be surprised to see a GoPro Hero 3 Black successor with the A9 inside, and those higher framerate capture modes fully enabled, at some point in the very near future.

Source: Ambarella
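The claim that the A9 doubles the previous generation's frame rates can be checked in terms of pixel throughput. The Python sketch below assumes "4K" means 3840 x 2160 (the post doesn't specify which 4K raster is used). It models HDR as consuming two sensor frames per output frame, as described above, and it ignores encoder and bit-rate limits.

```python
from typing import Dict, Tuple

# Frame sizes in pixels; "4K" is assumed to be 3840 x 2160.
RESOLUTIONS: Dict[str, Tuple[int, int]] = {
    "4K": (3840, 2160),
    "1080p": (1920, 1080),
    "720p": (1280, 720),
}


def pixel_rate(mode: str, fps: int) -> int:
    """Pixels per second the capture pipeline must process for a mode."""
    width, height = RESOLUTIONS[mode]
    return width * height * fps


def hdr_output_fps(sensor_fps: int, frames_per_hdr_frame: int = 2) -> int:
    """HDR merges several sensor frames into one, dividing the output rate."""
    return sensor_fps // frames_per_hdr_frame


modes = [("A7", "4K", 15), ("A7", "1080p", 60),
         ("A9", "4K", 30), ("A9", "1080p", 120), ("A9", "720p", 240)]
for chip, mode, fps in modes:
    print(f"{chip} {mode}{fps}: {pixel_rate(mode, fps) / 1e6:.1f} Mpixel/s")

print(hdr_output_fps(120))  # 60: 1080p120 capture yields 1080p60 HDR
```

Under these assumptions, the A7's 4K15 and 1080p60 modes both need about 124.4 Mpixel/s. The A9's 4K30 and 1080p120 modes both need about 248.8 Mpixel/s, exactly twice as much.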

Brian's Concluding Thoughts on CES 2013 - The Pre-MWC Show
Posted: 13 Jan 2013 03:26 PM PST

Anand asked each of us to write up some final thoughts on CES 2013, which is honestly a daunting task at best, and a potentially controversial or rage-inducing one at worst. Having now attended three CESes, I still have a relatively small frame of reference within which to gauge this one, but some things like CES need almost no context.

First, CES is, and hopefully always will be, a spectacle. I actually disagree with the many who say that Las Vegas shouldn't be the venue for CES. There's something appropriate about Las Vegas being the home of CES, since it's a city and environment I approach with as much skepticism and trepidation as the products and announcements made during the show. Almost everything you see isn't what it seems, and I've recounted a few analogies in person that I think bear repeating here. Just as the showiest buildings and people usually have the least to offer (and thus rely entirely on show and presentation to draw you in), so too do the exhibitors and companies giving you their exactingly rehearsed pitches. That is to say, good products and announcements draw their own crowds and don't need overselling with dramatic entrances and expensive demos. Some of the most engaging and busy companies I met with had almost no presence on the show floor, and instead had only a tiny meeting room with a single table and a few chairs. Similarly, just as hotels on the Strip appear close and within walking distance (their signs can be read from almost two miles away), so too should one approach the release dates for things announced at CES: they're almost always further away than they appear.
Finally, Vegas itself is a carefully engineered, computationally optimized environment designed to extract the maximum dollar from anyone it entwines, and so too the CEA crafts (and engineers) a show that will be crowded regardless of the number of attendees, and record-breaking in scale regardless of whether there's actually any real growth going on. I guess what I'm saying is that one has to approach everything CES-related with a certain kind of skepticism, and in that sense the only appropriate context for gauging CES is itself, as a subset of Las Vegas. I don't think you could ever have a CES detached from that environment, and especially not without the pervasive, eye-stinging smell of the casino floors, which is a world-unique combination of cigar smoke, cigarette smoke, spilled drinks, sweat, hooker spit, and the despair of a thousand souls and crushed dreams. No, a CES detached from Vegas wouldn't be a CES at all.

So what are my concluding thoughts about this CES? I've heard lots of grumbling from a few of my peers that CES is increasingly irrelevant as a place to look for mobile news, but I think that's false. They're talking about handset announcements and superficial things one can get hands-on time with and take pictures of, rather than actual mobile news. From a superficial mobile handset perspective, sure, CES is increasingly not the place to go; it's too close to MWC, and too many OEMs now want to throw their own events to announce specific handsets, or just do it at the mobile show rather than get lost in the noise that is CES. Unless you're a case manufacturer, there really isn't any reason to launch your major handset at CES unless it's going to ship very early in the year's product cycle. There were only a few major mobile handset announcements: Intel, ZTE, Huawei, and Sony announced new phones. Samsung dropped a token handset announcement or two in at the end, but nothing flagship at all.
On the other hand, there was actually plenty of mobile-related news that wasn't handset related. You just have to know what to look for. Heck, the keynote was by Qualcomm CEO Paul Jacobs instead of Microsoft's finest. What clearer indication does one need that we're entering a mobile era where the desktop takes a back seat? There was plenty of mobile news as far as I'm concerned. Qualcomm finally announced MSM8974, the part which is essentially the MSM8960 successor on Qualcomm's roadmap, and a revamped version of APQ8064 with Krait 300 CPUs inside. They also rolled out new Snapdragon branding, which completely casts away Qualcomm's previous S-series and has me yet again fielding questions from everyone about which part now fits under which arbitrarily drawn umbrella in the 200 / 400 / 600 / 800 series (spoiler: even I don't know how the existing lineup maps to the new numbering, just that the part I will keep calling MSM8974 is an 800 series part and that APQ8064T is a 600 series part). NVIDIA formally announced its Tegra 4 SoC the night before the CES rush, and alongside it, Project Shield, its mobile gaming platform slash PC gaming accessory. In addition, NVIDIA announced its Icera i500 LTE platform and made some interesting promises about future upgradeability. Similarly, Samsung announced Exynos 5 Octa (an absolutely unforgivably atrocious name for an SoC that will expose at most 4 cores at once to the OS, and with 5410, not 5810, as its part number). Samsung also showed some curved displays and example designs I got a chance to look at, before telling me they regretted ever letting me into their showroom and meeting area. Broadcom also teased, but somehow didn't formally announce, its LTE baseband that has been rumored and rumbled about for months. We saw the first PowerVR Series6 GPU, a two-cluster part, running on LG's silicon inside some of its smart TVs.
In perhaps the event's smallest meeting room, I met with Allwinner, who talked about their A31 and A20 SoCs, which are getting a lot of attention. Audience also announced their eS515 noise cancellation and sound processor, the first to implement a three-mic algorithm (other three-mic designs switch between pairs). Amazingly, T-Mobile also lit up AMR-WB (Adaptive Multi-Rate Wideband) for higher fidelity voice calls across its whole network right during the show. In short, there's a ton of mobile news; it just isn't mobile handsets or anything necessarily tangible.

I guess my takeaway is that CES's niche in the mobile space isn't to be the place for handsets you can put your hands on, but rather a place to lay the groundwork for that to happen at MWC. Essentially, companies announce or tease the hardware that's going to show up in specific devices at the next show. I get that those devices are what most people are after, but if you're looking for CES to be that venue, you're doing things very, very wrong. Anyhow, that is really what CES seems like to me: lots of announcements about things that we will shortly see in actual devices announced at that next show.

Wireless charging was also a huge theme for my CES: somehow I met with every wireless charging standards body and a few charge transmission and receive chip suppliers in the same day. The differences between resonant and magnetic induction based wireless charging systems are now burned into my head. The operators and device makers are testing the waters with just a few devices to see how consumers react, and the future of wireless charging hinges on their adoption.

The real winners of CES in my mind are two things: the Samsung Galaxy Camera and Oculus Rift. The Samsung Galaxy Camera seemed to be everywhere. Even though the device had nothing to do with CES, I couldn't help but notice that everyone seemed to have one: journalists, analysts, attendees, exhibitors, you name it.
Some people had clearly been sampled a review unit, and others had simply gone out and bought the device to try it out. I found myself pulling out my DSLR less and less and using the Galaxy Camera more and more for video and simple photos, whenever I had it handy. Obviously the Galaxy Camera can't match even the most entry-level DSLR, but there's something immediately more powerful about a camera that uploads photos and videos to Dropbox so you'll have them ready, or that lets you immediately tweet and post something interesting. Connected cameras are going to become an even more critical topic in the coming year, and in my time reviewing devices I've never had a device elicit as many questions and curious glances as the Galaxy Camera. Say what you want about the imaging experience or battery life, but Samsung did an excellent job nailing most of the core fundamentals and UX right out of the gate with the Galaxy Camera. That's something the Nikon S800c and Polaroid are still a ways away from.

I'm going to write a lot about Oculus Rift in another CES-related pipeline post, but their demos and pre-development platform hardware are already so much better than any shipping VR kit out there that it's embarrassing. The tracking speed and fluidity are scarily good, and the experience is already so close to phenomenal that everyone describes it as "going in" and "coming out" rather than "putting the goggles on" or "taking them off." That isn't to say that things are perfect, but the development kit that's coming will be nothing short of impressive.

Finally, I have to hand it to the wireless operators, who managed to keep their LTE networks up and working during the entire show. I fully expected at least one of the major operators to die entirely, and this is perhaps the first year that I haven't had massive issues doing simple things like sending SMSes or placing calls while anywhere near the LVCC.
The numerous DAS (Distributed Antenna Systems) in the LVCC and major hotels did their job and worked well. Things weren't flawless, or necessarily the fastest I've seen them, but connectivity never died entirely. CES 2013 drew to a close almost as quickly as it started from my perspective, and I can't help but admit that I already miss the insane pace of it all.

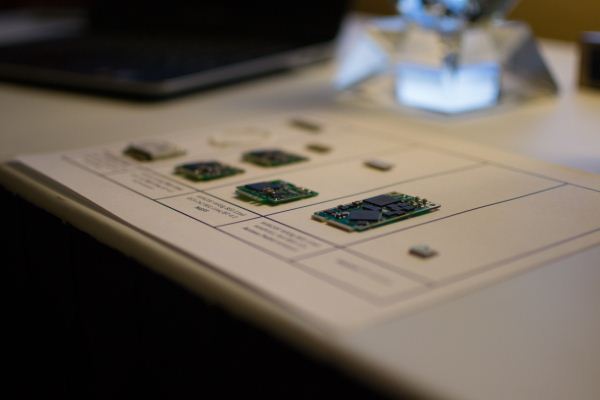

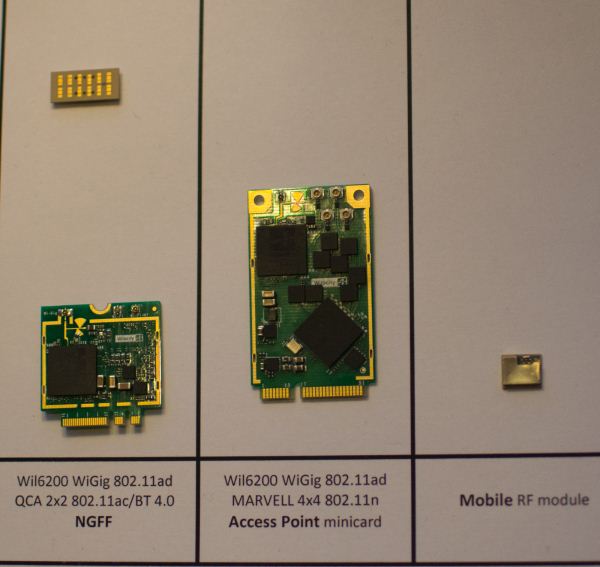

| WiLocity Bringing WiGig To Your Desk, Lap, Home and Office Posted: 12 Jan 2013 06:44 PM PST We had a chance to meet with WiLocity to take a look at their progress in bringing WiGig to market. Let’s start with a primer. WiGig (802.11ad) is an air interface that operates in the 60 GHz band, providing massive bandwidth and some keen tech to avoid the crowding issues seen in the 2.4 GHz band. At such a high frequency, though, propagation is rather limited; although there are some provisions for bouncing signals around a corner, WiGig is meant primarily as a line-of-sight interface. So what can you accomplish with WiGig? Let’s look at the demos. WiLocity has fleshed out two routes for using WiGig: as a networking protocol and as a bus replacement. With a WiGig module installed in your device (a tablet or PC at present), you can use multiple peripherals connected to a WiGig-enabled dock. Your computer detects these peripherals as connected through a PCI Express bus, and with so much bandwidth available, no penalty is paid versus connecting the peripheral to a wired PCI Express lane. The low latency lends itself to video and gaming applications. This is truly a wire replacement solution, and though it is limited to a single room, it’s still better than dragging cables around. The networking solution WiLocity has developed is impressive, though in its current state it is better suited to the office than the home. Businesses that handle large files are often at the mercy of wired connections to move files between users quickly. Though Wi-Fi speeds are improving, and 802.11ac is finally starting to trickle out, maximum throughput is still achieved only over wired GigE connections. Configuring a WiGig network over a bank of cubicles, though, would allow each client to receive greater-than-GigE speeds with just a few access points, and without interfering with other wireless products and devices.
WiLocity’s solution falls back to 802.11ac when operating beyond line of sight, and does so seamlessly. That need for line of sight limits its appeal in enclosed environments such as the home, but as a value-add, an 802.11ac access point that also provides WiGig throughput in the same room as the router could find applications in media centers and home offices. In addition to the demos, WiLocity showed off its recently updated module (built in partnership with Qualcomm Atheros), now upgraded from 802.11n to 802.11ac and featuring a new, smaller RF module with 32 individual elements arranged in an almost omnidirectional fashion to improve performance. Marvell also has a new access point module with 4x4 802.11n. Products featuring these modules should be released this year, and the roadmap for the next few years continues this trend of improving service while shrinking the module size further. Ultimately, WiLocity would like to see a component small enough to be embedded in a handset. They expect the first WiGig-equipped handsets to premiere in 2014, with mass production in 2015. For now, we’ll be on the lookout for any WiGig devices that are ready to be run through their paces.
